Executive Summary

It is very difficult to understand what is going on inside today’s Artificial Neural Networks (ANNs). AI, and GenAI in particular, has become a black box.

Instead of trying to decipher how it works, or fine-tuning an entire LLM, we should “parent the AI”: guide it to work on what we want and, more crucially, the way we want it to work. This means applying AI to carefully selected use cases in very specific domains with well-defined rules and clear terminology and vocabulary. This is done through detailed ontological modelling of that domain, curated by Subject Matter Experts (SMEs). The domain model translates into specific concepts and vocabulary (terminology) that are used in the prompts that instruct the AI. This type of data and knowledge curation satisfies the human-in-the-loop requirement.

I call this approach Language Ops or LangOps for short and it leverages the fact that there is no language without meaning and – more importantly – no meaning unless it is expressed through language.

The LangOps approach of integrating domain-specific knowledge in prompt templates is the difference between a generic out-of-the-box LLM and a customised domain- and use case-specific AI tool without needing to build a domain-specific LLM from scratch. It creates a type of evolved, highly efficient Expert System or well-behaved, mature ANN by turning the AI black box into a more transparent box.

In this article, I explain the LangOps AI Design process and provide detailed examples of applying the different steps of the LangOps methodology to design and start implementing the use cases of two client BUs (HR and Finance).

Finally, I recommend a number of questions to ask when you are considering leveraging or building an AI system. The questions, just like the LangOps AI design methodology itself, will help you move from AI vision to AI implementation for controlled, transparent, explainable, compliant, ethical, and responsible AI applications.

AI Is Now a “Black Box”

Nowadays every organisation and government is exploring how to leverage AI and Generative AI to gain efficiencies and generate a positive impact for its customers and citizens. At the same time, local, national and international laws and regulations are increasingly imposing a transparency requirement on AI. AI applications need to operate in a predictable and controllable manner, and their creators or owners need to be able to explain why a decision was made or not made, or why something went wrong. Examples of things going wrong include denying someone insurance cover because of their skin colour or rejecting a job candidate because of their accent. To keep your clients’ trust and your own reputation intact, you need to remain accountable for your AI. It is a two-way interaction: there cannot be accountability without transparency, and transparency forces accountability.

The problem is that it is very difficult to understand what is going on inside today’s Artificial Neural Networks (ANNs). This has always been the case, from the time I was experimenting with my tiny 3-layer ANN in the early ’90s, but even more so now. Nowadays there can be a hundred or more hidden layers (compared to the one I had), with millions of units and billions of connections among them. The number of possible interactions grows combinatorially with the size of the ANN, which renders the whole process even more obscure and unfathomable.

So what are we to do?

We Need to “Parent” AI to Make It Behave (Responsibly)

Instead of trying to decipher how an ANN works, or fine-tuning an entire LLM, we should “parent the AI”: educate and guide it to work on what we want and, more crucially, the way we want it to work. This means applying AI to carefully selected use cases in very specific domains with well-defined rules and clear terminology and vocabulary. By selecting and defining the specific domain world in which a given AI application should, and is allowed to, reason and act, we remove the need to specify and model the whole of human experience, a massive, ultimately impossible and rather pointless task. By controlling the definition of objects and their properties, of actors and processes, of the relationships and interdependencies among them, and of the rules and decision points of our specific world, we can both prescribe and predict, and thus also explain, how the AI uses the corresponding terms, how it reasons about them, and how and why it behaves as it does in that world. This is done through detailed ontological modelling of the domain, curated by Subject Matter Experts (SMEs). The domain model translates into specific concepts and vocabulary (terminology) that are used in the prompts that instruct the AI. This type of data and knowledge curation satisfies the human-in-the-loop requirement.

The Solution Is Language Ops (LangOps)

By integrating Knowledge Engineering with Language and Prompt Design and Engineering, we bring together language and meaning, merging Linguistics with Cognitive Science and Machine Learning. I call this approach Language Ops, or LangOps for short, and it leverages the fact that there is no language without meaning and – more importantly – no meaning unless it is expressed through language. Similarly, we cannot define a domain outside of language; we have to use words to “model” it. This is exactly where LLMs fail us. They use sophisticated words, but have nothing to “express”. LLMs don’t work with meaning; they just bring together phrases that statistically and historically belong or simply appear together.

The interdependence between language and meaning is something that all Linguists, like myself, know. It is, however, something that people forget now that everybody is doing Prompt Engineering to some degree; using Generative AI in everyday life has made us all into Prompt Engineers in some form. Nevertheless, Linguists, Computational Linguists and professionals already working with language (e.g. Conversation Designers and Copywriters) are the only ones who can write surgically precise, effective and impactful prompts. The choice of words almost always determines whether you get a barely relevant result or the best one. In an enterprise environment, where you write prompts for your customer’s AI applications, it often makes the difference between a safe and reliable tool and a cybersecurity threat. The precise selection of words and phrases in a prompt is also crucial when defining a domain-specific task and when controlling what its output should be. Thus, precision affects accuracy; effectiveness affects relevance; usability and user experience affect impact.

The LangOps approach of integrating domain-specific knowledge in prompt templates is the difference between a generic out-of-the-box LLM and a customised domain- and use case-specific AI tool without needing to build a domain-specific LLM from scratch. It creates a type of evolved, highly efficient Expert System or well-behaved, mature ANN by turning the AI black box into a more transparent box.

Controlling AI Through LangOps

We have seen why AI applications, and GenAI applications in particular, need careful design. The LangOps methodology for designing and developing AI and GenAI applications involves the following steps:

AI Use Case Selection Ensures Impactful & Responsible Applications

Identifying and prioritizing high-impact applications that can solve specific business challenges today and selecting the optimal technology stack to implement them (AI, Generative AI or traditional Machine Learning). This happens alongside the domain / BU SMEs, who also have visibility into medium- and long-term needs and can futureproof any decisions.

Domain Model Curation Provides the Context for Explainability & Accountability

Ensuring relevance, accuracy, transparency, compliance and control by modelling the domain vocabulary and logic in close collaboration with domain SMEs (who are the human-in-the-loop).

Prompt Design & Engineering Controls & Curates the AI Output

Designing and carefully crafting prompts that integrate a model of the domain and its specific use cases (domain ontology) in order to guide and control the AI response. Testing prompts against different AI tools and AI models for accuracy, dependability and safety.

Plug-And-Play Prompt Template Libraries Ensure Reusability and Compliance

Compiling, maintaining, modularizing and reusing standardized plug-and-play prompt templates for efficient, streamlined, secure and equitable Prompt Design and Prompt Engineering across different AI models and use cases.

Prompt Templates Ensure Customization

Efficient tailoring of standardized prompt template and prompt segment collections to different domains and use cases for organization-specific personalized GenAI-powered interactions.

Conversational Experience Design for User-Centricity

Crafting best-in-class, effective, intuitive, engaging, brand-aligned and personalized human-AI language interfaces and end-to-end experiences that allow users to converse naturally with, and act on, BU data.

LangOps in Practice: 2 Use Cases

So what does this look like in practice?

Below is a detailed example of applying the different steps of the LangOps methodology to design and start implementing the use cases of two client BUs (HR and Finance).

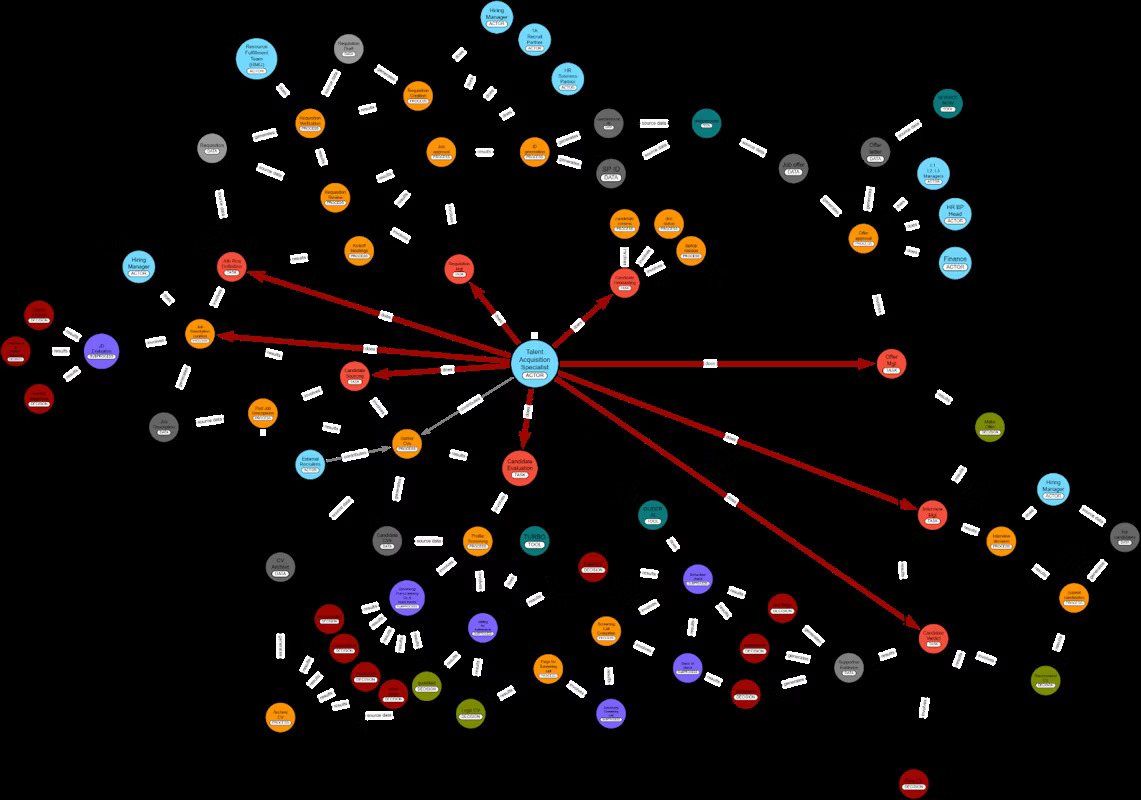

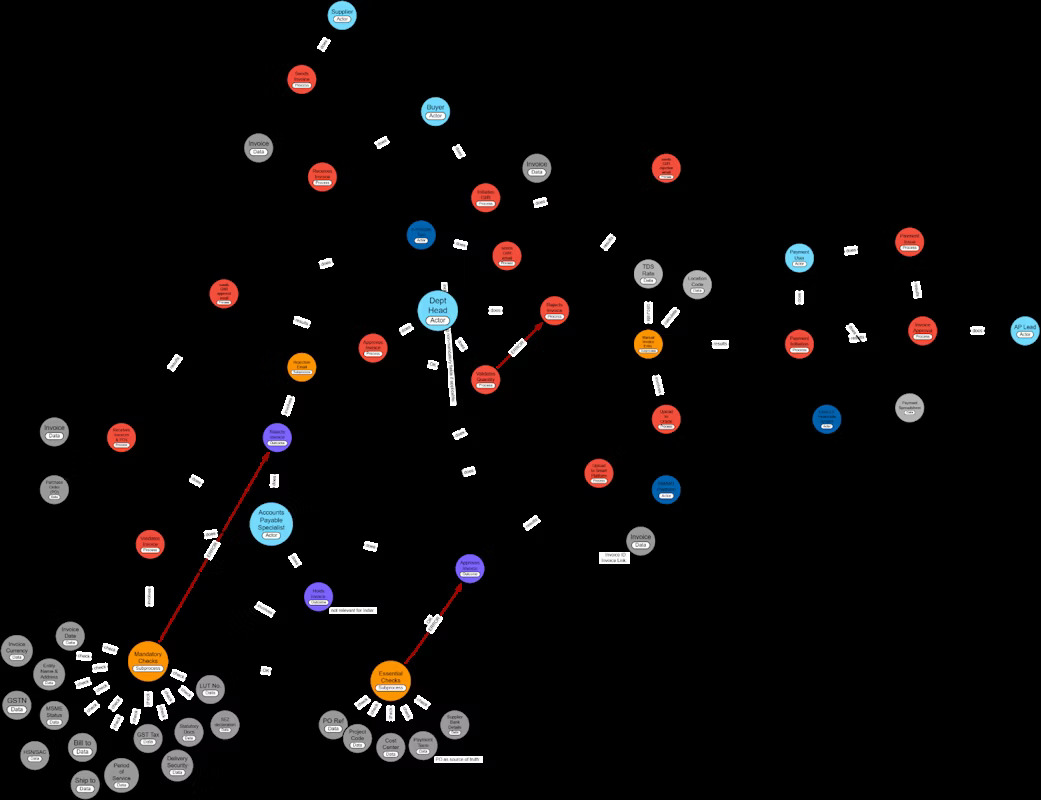

Domain Ontology Modeling

From the start, we needed a clearer understanding and more precise representation of each BU’s workflow (the actors, processes, tasks, data types, decision points, rules and outcomes). This would enable us to effectively translate them into a domain ontology for each BU, a complex knowledge graph with the core concepts, dependencies, rules and outcomes that captures the domain logic necessary for more effective prompt design and ultimately more relevant, accurate and transparent AI responses.

What It Is

The goal of the domain model (the ontology, the knowledge graph) is to establish a common vocabulary between the BU SMEs and the AI application designers in order to capture the domain logic. The application designers then “plug” or integrate this vocabulary and logic into the prompts (the wheels that make the AI turn).

A domain ontology consists of:

- the main actors (who)

- the main processes and actions (what – tasks, steps)

- the domain logic (how & when – conditions, rules and dependencies, outcomes)

- the domain data & tools (what & how – input and output data, tools supporting the workflow)

What Form It Takes

A domain knowledge graph is a graphical representation of the ontology in terms of nodes and arcs between the nodes, which indicate relationships and dependencies. Knowledge graphs can be created with tools such as Arrows, translated into Cypher and JSON code, and readily integrated into prompts.
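To make the idea of nodes, arcs and their translation into Cypher and JSON more concrete, here is a minimal Python sketch. The mini-ontology, the node labels and the helper names `to_cypher` and `to_json` are all hypothetical and chosen for illustration only; a tool such as Arrows produces its own export, and the output here is only meant to show the general shape of such code.

```python
import json

# Hypothetical mini-ontology for Accounts Payable (illustrative names only,
# not the ontology validated with the SMEs).
nodes = [
    {"id": "clerk", "label": "Actor", "name": "AP Clerk"},
    {"id": "validate", "label": "Task", "name": "Validate invoice"},
    {"id": "invoice", "label": "Data", "name": "Invoice"},
]
edges = [
    {"source": "clerk", "type": "PERFORMS", "target": "validate"},
    {"source": "validate", "type": "USES", "target": "invoice"},
]


def to_cypher(nodes, edges):
    """Render nodes and arcs as Cypher CREATE clauses, one per line."""
    lines = []
    for n in nodes:
        # Escape backslashes and single quotes so the name is a valid string literal.
        name = n["name"].replace("\\", "\\\\").replace("'", "\\'")
        lines.append(f"CREATE ({n['id']}:{n['label']} {{name: '{name}'}})")
    for e in edges:
        lines.append(f"CREATE ({e['source']})-[:{e['type']}]->({e['target']})")
    return "\n".join(lines)


def to_json(nodes, edges):
    """Render the same graph as a JSON document."""
    return json.dumps({"nodes": nodes, "relationships": edges}, indent=2)


cypher_text = to_cypher(nodes, edges)
json_text = to_json(nodes, edges)
```

Either text form can then be pasted into a prompt, which is what makes the graph usable as shared vocabulary for the AI.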

A different way to represent an ontology is through tables listing the core nodes / concepts and the different values or instantiations they take. This can be easily done in an Excel spreadsheet. Although not visual, it can help capture more details and even see relationships and dependencies more clearly.
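As a rough illustration of the tabular form, the Python sketch below flattens a small set of concepts and their values into rows that a spreadsheet can open as CSV. The concepts, the values and the helper names `to_rows` and `to_csv` are hypothetical examples, not the content of the actual ontologies.

```python
import csv
import io

# Hypothetical concepts and values for a Talent Acquisition ontology.
concepts = {
    "Actor": ["Recruiter", "Hiring Manager", "Candidate"],
    "Task": ["Write job description", "Screen candidates"],
    "Decision point": ["Shortlist or reject"],
}


def to_rows(concepts):
    """Flatten the concept -> values mapping into (concept, value) rows."""
    return [(concept, value) for concept, values in concepts.items() for value in values]


def to_csv(rows):
    """Serialise the rows as CSV text with a header line."""
    buffer = io.StringIO()
    writer = csv.writer(buffer, lineterminator="\n")
    writer.writerow(["concept", "value"])
    writer.writerows(rows)
    return buffer.getvalue()


rows = to_rows(concepts)
table_text = to_csv(rows)
```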

How We Arrive at It

We held a number of one-to-one interviews with each SME in each BU to capture their view of their current workflow and the different processes and steps involved, including any pain points and challenges, and any specific use cases they wished could be automated through AI. On the basis of those interviews, we constructed a first draft of each (separate) ontology: a visual knowledge graph for the HR BU and one for the Finance BU, each focusing on a specific set of use cases (Talent Acquisition and Accounts Payable, respectively). We presented the 2 ontologies to the SMEs in the course of two 1.5-hour-long workshops. During the workshops we validated and, where necessary, corrected or refined the various components of each ontology (actors, tasks, processes, dependencies, decision points, input and output data, and the domain logic that will form the instructions to the AI agent(s)).

What It Enables

Validating and refining the domain ontology ensures a grounded discussion with the BU SMEs over the optimal workflow aspects to automate through AI, as well as a clear understanding of how to automate them through targeted prompt design and engineering. Incorporating the domain model and the associated knowledge graph into the prompt templates is currently one of the best ways to ensure transparency of AI reasoning and action, which in turn facilitates explainability and accountability. Transparency, explainability and accountability are 3 of the core principles of AI Design.

Here are screenshots of the 2 separate mini-ontologies, one for HR (Talent Acquisition) and one for Finance (Accounts Payable).

Example Ontologies

Prompt Design & Language Engineering

What It Is

After each BU’s workflow has been clearly defined in terms of a domain model or ontology, the associated common vocabulary and domain logic will be incorporated in prompt templates that detail AI instructions.

There are 2 types of prompt, System prompts and User prompts:

System Prompts, or instruction prompts, are the backbone of AI: ChatGPT, Gemini and Copilot all use their own system prompts. They are usually 2+ pages long and are written by Prompt Designers / Language Engineers. They contain instructions on how the AI should behave (reason, talk and act), e.g. in a responsible, ethical and fair manner, avoiding inaccuracies, misinformation, bias, rudeness or aggression. They also define the specific task of the AI application in terms of domain-specific concepts, rules, data and outcomes, as above.

User Prompts are the public interface of AI and work on top of the system prompts: they are written by SMEs and users who are using the AI application and can be as short as a sentence or even an incomplete phrase (e.g. “List all current active roles” or “What about the next best match?”).

System instructions and user prompts taken together are part of implementing an AI application: they prescribe AI reasoning and guide AI action.

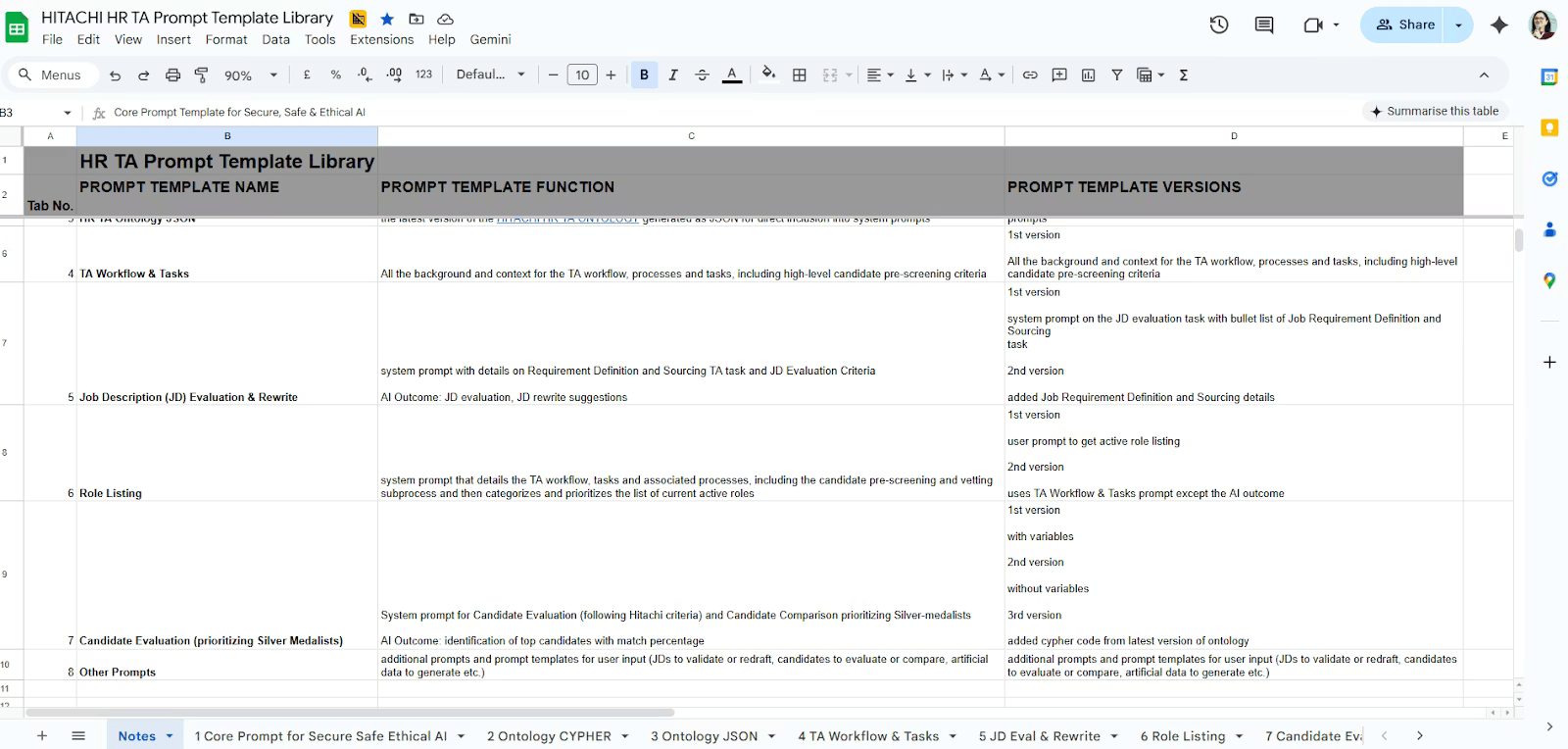

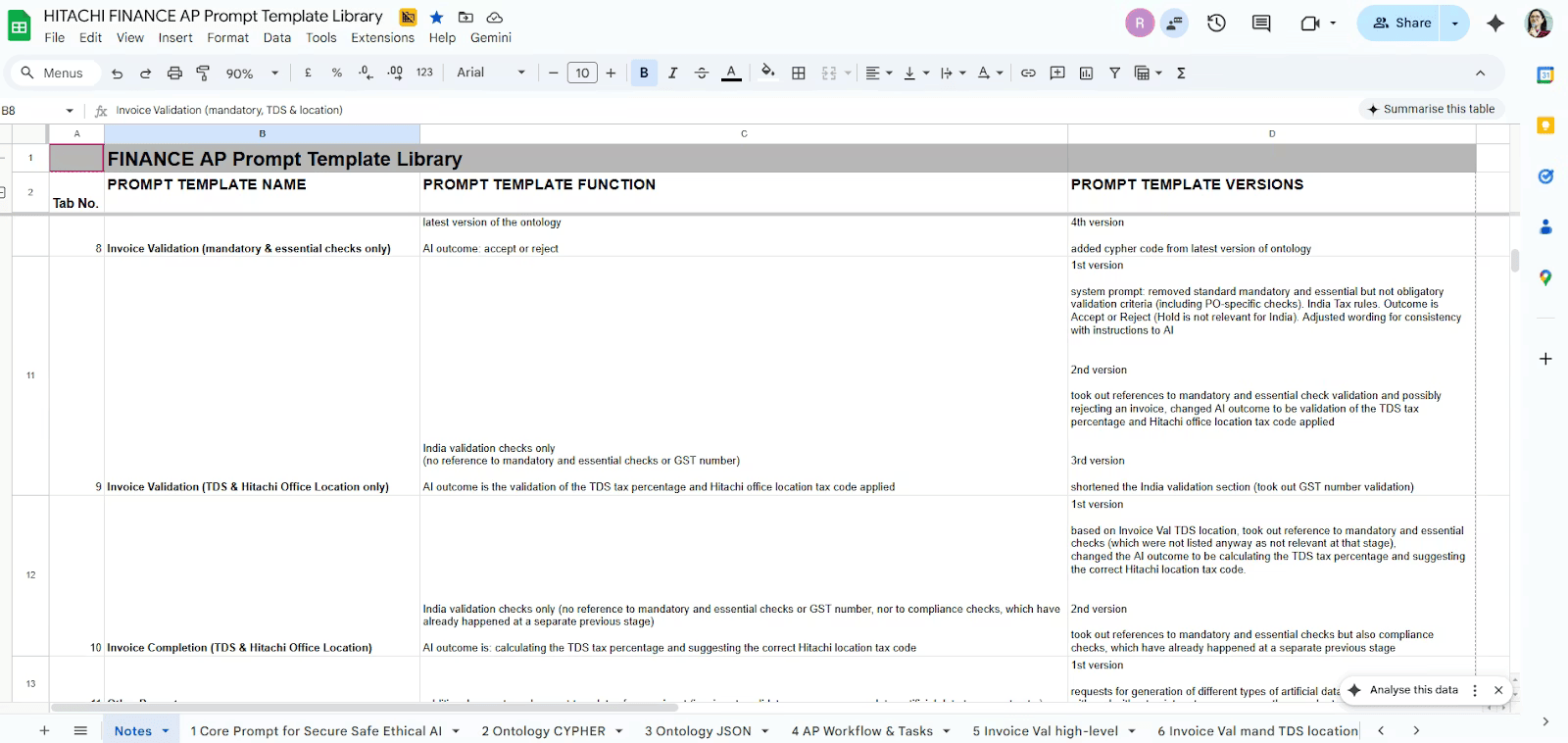
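The layering of User prompts on top of System prompts can be sketched in a few lines of Python. This sketch assumes a role-tagged message list, a convention used by many chat-style interfaces; the exact format depends on the AI tool. The prompt texts and the `build_messages` helper are hypothetical.

```python
# Hypothetical, heavily shortened system prompt: behaviour rules plus the domain task.
BEHAVIOUR_RULES = "Respond accurately, fairly and politely."
DOMAIN_TASK = "You help HR recruiters manage open roles, using the Talent Acquisition vocabulary."


def build_messages(user_prompt, history=()):
    """Place the system instructions first, then any earlier turns, then the new user prompt."""
    system_prompt = BEHAVIOUR_RULES + "\n" + DOMAIN_TASK
    return [
        {"role": "system", "content": system_prompt},
        *history,
        {"role": "user", "content": user_prompt},
    ]


first = build_messages("List all current active roles")

# A short follow-up only makes sense together with the earlier turns.
earlier_turns = first[1:] + [{"role": "assistant", "content": "(answer from the AI tool)"}]
follow_up = build_messages("What about the next best match?", history=earlier_turns)
```

The point of the sketch is that the user never sees or writes the system part, yet every user prompt, however short, is interpreted against it.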

What Form It Takes

Prompts and prompt templates are delivered as an Excel Spreadsheet, one for each BU. Each dedicated domain-specific Prompt Template Library contains modular, reusable and customizable prompt templates for the use cases identified and prioritised and includes guidelines on how to use them.

Prompt templates were constructed that cover the following use cases among others:

- Templates for describing the whole workflow, processes and tasks, for each BU

- The Cypher and JSON code expressing the Ontology graph

- Core Prompt Template for Safe & Ethical AI with a set of guidelines and safeguards for Safe, Secure, Ethical, Fair and Compliant AI reasoning and AI output

- Templates for the desired use cases (Job Description Evaluation & Rewriting, Candidate Evaluation, Invoice Validation and Completion)

- Example User prompts for RAG to provide job descriptions, candidate profiles or invoices, but also to generate artificial test data

Below are screenshots of the 2 Prompt Libraries we delivered.

Example Prompt Libraries

How to Use It

Use the prompt templates as they are, or customize them for changing SME preferences, Department guidelines or related BUs.

What It Enables

The role of the designed prompts is to define instructions, rules and logic for one or, ultimately, more AI agents, which will automate the BU workflow tasks that cause the pain points already identified.

At the same time, the designed prompts can function as plug-and-play templates with modular reusable prompt segments that can be customized and personalized to specific Departments, BUs, domains, user types and even SME preferences.
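One way to picture modular, reusable prompt segments is with Python's standard `string.Template`. The segment texts, the placeholder names (`bu`, `task`, `output_format`) and the `render_prompt` helper below are assumptions made for illustration; the delivered libraries were spreadsheets, not code.

```python
from string import Template

# Hypothetical reusable segments; real ones would be far more detailed.
SEGMENTS = {
    "safety": "Answer accurately, fairly and politely. Say so when you are unsure.",
    "task": Template("You support the $bu team. Task: $task."),
    "output": Template("Return the result as $output_format."),
}


def render_prompt(bu, task, output_format, extra_segments=()):
    """Combine the shared segments with BU-specific values and optional extras."""
    parts = [
        SEGMENTS["safety"],
        SEGMENTS["task"].substitute(bu=bu, task=task),
        SEGMENTS["output"].substitute(output_format=output_format),
        *extra_segments,
    ]
    return "\n".join(parts)


finance_prompt = render_prompt("Finance", "validate invoices", "a table")
hr_prompt = render_prompt(
    "HR",
    "evaluate job descriptions",
    "a bullet list",
    extra_segments=["Follow the Department style guide."],
)
```

The same safety segment is reused unchanged in both prompts, while the task, output and extra segments carry the customization.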

How We Arrive at It

On the basis of the ontologies we had created and validated, we constructed prompt templates for the identified use cases for each BU, which we tested using an enterprise-grade, company-approved AI tool, such as Google Gemini. Because test data was lacking or the BUs were unwilling to share any, we generated test data using the same AI tool (e.g. fake invoices, mock job descriptions and candidate profiles). This avoids the need to share, and potentially compromise, sensitive data, while still allowing comprehensive testing of the relevance, accuracy and compliance of the prompts.

During the workshops with the SMEs, we presented the prompt templates we had created and demonstrated how they would work using artificial test data we had prepared. The SMEs validated the prompts’ relevance and value and provided ideas and requests for alternative prompts and prompt templates that we added to the respective Prompt Libraries that we ultimately delivered.

The LangOps Design Process Flow

Here is the whole LangOps AI Design process in summary:

- We build the BU domain model with the SMEs (Ontology Knowledge Graph using e.g. Arrows.app)

- We export the ontology as Cypher code

- We include this Cypher code in a prompt template and add to it specific instructions for the task at hand

- We also include the guidelines for Safe & Ethical AI from the Core Prompt Template

- Alternatively, we reuse a template with a similar function and customize it

- We test the resulting prompt template using the BU’s approved AI tool with an Enterprise licence (e.g. Google Gemini)

- We refine the prompt template depending on the results we get
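The steps above can be sketched in Python as follows. Everything named here is hypothetical: the Cypher snippet, the guideline text, the section titles, and the helpers `assemble_prompt` and `try_prompt`. The `stub_model` function merely stands in for the approved AI tool so that the sketch runs without any external service.

```python
# Hypothetical Cypher export of a tiny Accounts Payable ontology.
ONTOLOGY_CYPHER = """CREATE (clerk:Actor {name: 'AP Clerk'})
CREATE (validate:Task {name: 'Validate invoice'})
CREATE (clerk)-[:PERFORMS]->(validate)"""

# Hypothetical extract from a core template for safe and ethical AI.
CORE_GUIDELINES = "Be accurate, fair and respectful. Never invent invoice data."


def assemble_prompt(ontology_cypher, task_instructions, guidelines=CORE_GUIDELINES):
    """Combine the domain model, the task instructions and the core guidelines."""
    sections = [
        ("DOMAIN MODEL (Cypher)", ontology_cypher),
        ("TASK", task_instructions),
        ("GUIDELINES", guidelines),
    ]
    return "\n\n".join(f"{title}:\n{body.strip()}" for title, body in sections)


def try_prompt(prompt, ask_model, test_inputs):
    """Send each test input together with the prompt and collect the answers for review."""
    return {item: ask_model(prompt, item) for item in test_inputs}


def stub_model(prompt, test_input):
    # Stand-in for the approved AI tool; a real call would go here.
    return f"[{len(prompt)} prompt chars] reviewed: {test_input}"


prompt = assemble_prompt(ONTOLOGY_CYPHER, "Check each invoice for a purchase order number.")
answers = try_prompt(prompt, stub_model, ["fake invoice 001", "fake invoice 002"])
```

Reviewing `answers` with the SMEs is what drives the final refinement step: the wording of the task or guideline sections is adjusted and the prompt is tried again.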

Questions to Ask When You Consider an AI Build

We have seen how AI has gone from hand-crafted expert systems to supervised statistics-based pattern matching machines, to the current unsupervised LLM black boxes, which learn and simulate patterns incredibly well, and back again to AI and GenAI desperately needing human curation and supervision. This is the reason why AI applications in particular need to be carefully designed, to bring transparency and, hence, accountability back.

So when you are considering leveraging or building an AI system, you have to ask the following questions:

- Have you carefully selected the use case(s)? Are they valuable, impactful and futureproof? What impact will they have on your customers and your employees in the short and medium term?

- Have you built a detailed model of the domain in collaboration with the SMEs? Have you captured the actors, processes, tasks, logic, rules, decision points and necessary data?

- Have you translated the domain model into an ontology that represents the domain logic and a shared vocabulary for the tasks? Was the ontology created by someone with a Linguistics, Cognitive Science or Knowledge modelling background? Have you validated the ontology with the SMEs?

- Have you embedded the domain model and vocabulary into modular, reusable and customizable prompt templates? Were they written by a Linguist, Computational Linguist or Conversational Experience Designer? Do they cover all the necessary use cases? Do they include guidelines for safe, accurate, responsible, ethical, fair AI processing and AI output?

- Have you tested all the prompt templates with real-world or realistic test data? Do they fulfil the prescribed purpose and use case?

- Have you tested all the prompt templates with different AI models or at least the models that will be used in production?

- Are you maintaining a Library of plug-and-play customizable prompt templates for use with new use cases for new BUs, Departments or changing requirements?
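The testing questions in this list lend themselves to a small harness. The Python sketch below scores one prompt template against several models on the same test cases. The two model functions are hypothetical stubs rather than real AI tools, and the substring check is a deliberately crude stand-in for the review an SME would do.

```python
def evaluate(models, prompt, cases):
    """Return, per model name, the fraction of cases whose answer contains the expected text."""
    scores = {}
    for name, ask in models.items():
        passed = sum(
            expected.lower() in ask(prompt, text).lower() for text, expected in cases
        )
        scores[name] = passed / len(cases)
    return scores


# Hypothetical stubs standing in for two different AI models.
def model_a(prompt, text):
    return "APPROVE" if "PO" in text else "REJECT: missing PO number"


def model_b(prompt, text):
    return "APPROVE"


# Realistic but artificial test cases: (input text, text expected in the answer).
cases = [
    ("Invoice 1, PO 123", "approve"),
    ("Invoice 2, no purchase order", "reject"),
]

scores = evaluate(
    {"model_a": model_a, "model_b": model_b},
    "Validate the invoice and answer APPROVE or REJECT.",
    cases,
)
```

A table of such scores per template and per model gives a simple record of which combinations are fit for production.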

The above questions, just like the LangOps AI design methodology itself, will help you move from AI vision to AI implementation for controlled, transparent, explainable, compliant, ethical, and responsible AI applications.