Artificial intelligence promises to change industries and redefine competitive advantage. Yet the reality is more sobering: AI succeeds only as far as the data that powers it allows. Even when organizations invest heavily in AI initiatives, they often find that their data fails to serve its intended purpose.

Executives often say data is currency. In practice, however, data for AI behaves less like currency and more like a living system. It’s fragile, contextual, and susceptible to both growth and decay.

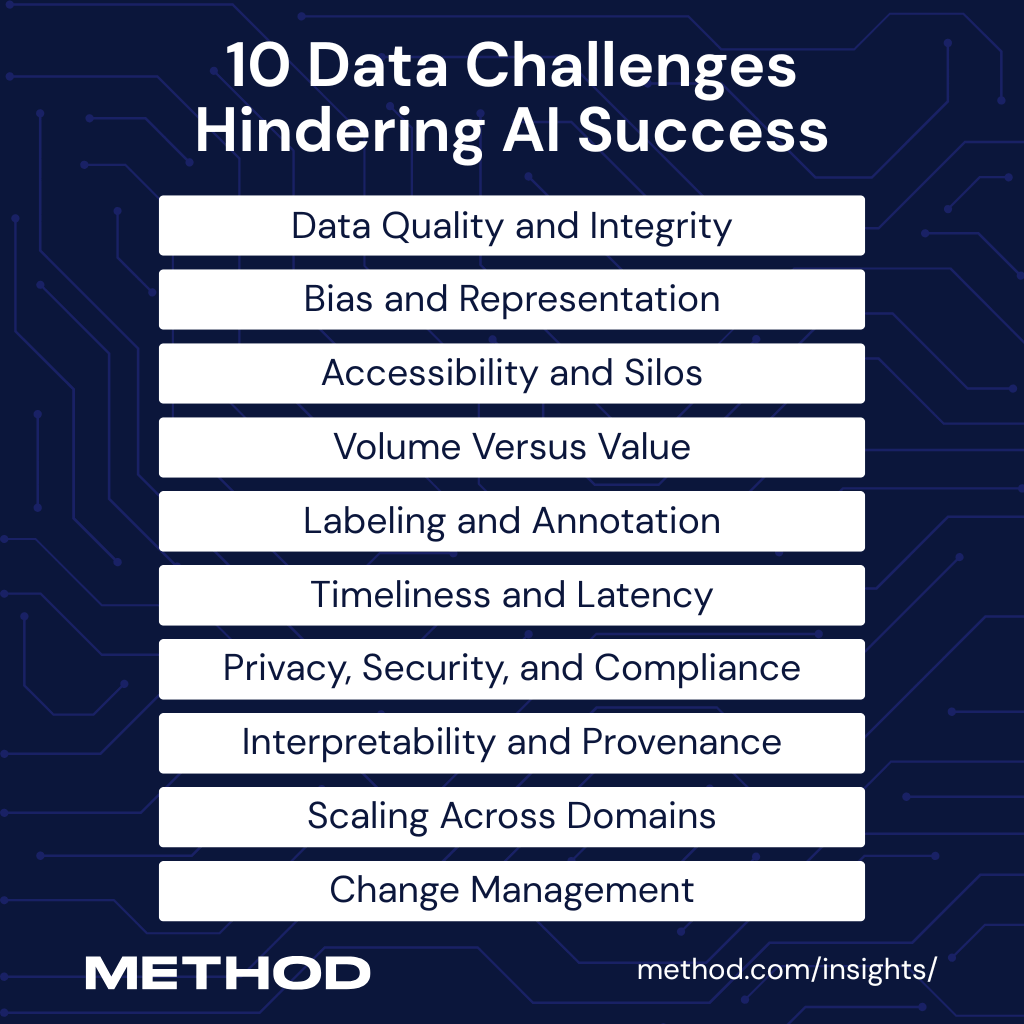

To understand why many AI projects fail to deliver quality, measurability, and clarity, we must confront the recurring data challenges that hinder their success.

1. Data Quality and Integrity

AI systems don’t just need “clean” data. They need representative, consistent, and trustworthy data pipelines.

In healthcare, for example, clinicians may record the same blood pressure reading as “120/80,” “120-80,” or “120 over 80.” Preprocessing can standardize this inconsistency, but the harder problems lie deeper: missing data from underserved populations, biased clinical notes shaped by local practices, or outdated records that don’t reflect a patient’s current status.
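The formatting half of this problem is the tractable one. As a minimal sketch, assuming readings arrive as free-text strings in one of the three formats mentioned above, a single regular expression can normalize them. The function name `parse_blood_pressure` is hypothetical, and nothing here addresses missing or biased records.

```python
import re
from typing import Optional, Tuple

# Accepts "120/80", "120-80", and "120 over 80" (case-insensitive, flexible spacing).
_BP_PATTERN = re.compile(
    r"^\s*(\d{2,3})\s*(?:/|-|over)\s*(\d{2,3})\s*$",
    re.IGNORECASE,
)


def parse_blood_pressure(raw: str) -> Optional[Tuple[int, int]]:
    """Return (systolic, diastolic) as integers, or None if the text is unrecognized."""
    match = _BP_PATTERN.match(raw)
    if match is None:
        # Unparseable values are surfaced as None rather than guessed at.
        return None
    return int(match.group(1)), int(match.group(2))


readings = ["120/80", "120-80", "120 over 80", "not recorded"]
normalized = [parse_blood_pressure(r) for r in readings]
```

Returning `None` for unrecognized text keeps the gaps visible instead of silently filling them, which matters because the deeper problems are about what is missing.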

IBM’s Watson for Oncology illustrates the danger. In 2018, internal documents reportedly revealed that the system had recommended unsafe cancer treatments because IBM trained it on only a small number of synthetic cancer cases rather than real-world patient data. The failure came not from the algorithms but from flawed training data.

Unlike formatting issues, these aren’t trivial fixes. They reflect structural gaps in how data is collected and curated.

If marginalized groups are underrepresented in electronic health records, no amount of cleaning will create the missing data. And when training relies on synthetic or proxy datasets, as in the Watson for Oncology case, the result can be dangerous recommendations, not because the algorithm is poorly designed but because the underlying data doesn’t capture real-world complexity.

2. Bias and Representation

AI reflects the biases embedded in its training data.

Amazon encountered this issue when it developed an AI-powered recruitment engine. The system penalized resumes containing the word “women’s” and even downgraded candidates from women’s colleges. Historical data dominated by men had shaped the model.

Credit scoring systems trained on biased lending data often exclude marginalized groups, while healthcare models that underrepresent minority populations underperform when applied to those same groups. Each failure can be traced back to what organizations choose to collect and what they leave out.

Mitigating bias in AI isn’t just about debiasing algorithms after the fact. It requires deliberate choices about what data is collected, whose experiences are represented, and how historical inequities are accounted for. Otherwise, the AI encodes and amplifies existing discrimination.
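One way to make those choices about representation concrete is to compare who appears in a training set with who appears in the population the model will serve. The sketch below is illustrative only: `representation_gaps`, the `group_key` field, the `reference_shares` mapping, and the 5-point `tolerance` are all assumptions a team would have to define for itself, and a clean result does not show that a dataset is unbiased.

```python
from collections import Counter
from typing import Dict, Iterable, Mapping


def representation_gaps(
    records: Iterable[Mapping[str, str]],
    group_key: str,
    reference_shares: Mapping[str, float],
    tolerance: float = 0.05,
) -> Dict[str, float]:
    """Return groups whose share of the data falls short of a reference share.

    The value for each flagged group is the shortfall (reference minus observed).
    """
    counts = Counter(record[group_key] for record in records)
    total = sum(counts.values())
    gaps = {}
    for group, expected_share in reference_shares.items():
        observed_share = counts.get(group, 0) / total if total else 0.0
        shortfall = expected_share - observed_share
        if shortfall > tolerance:
            gaps[group] = round(shortfall, 3)
    return gaps


# Hypothetical data: group "b" is half the reference population but 10% of the records.
sample = [{"group": "a"}] * 9 + [{"group": "b"}]
flagged = representation_gaps(sample, "group", {"a": 0.5, "b": 0.5})
```

A check like this only reports the gap. Closing it still depends on collecting data from the people who are missing.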

3. Accessibility and Silos

Many enterprises scatter their data across disconnected systems. Sales teams log information in Salesforce, finance relies on SAP, and operations turn to Oracle. Data scientists then struggle to build a coherent view from these fragmented pieces.

The challenge isn’t limited to technology. Legacy systems, governance restrictions, and internal politics reinforce silos that keep information locked away. A 2025 survey found that 83 percent of organizations report data literacy challenges, while only 28 percent have achieved adequate levels of data literacy across their workforce.

Without accessible and comprehensible data, AI initiatives stall before they can scale.

Overcoming data silos doesn’t simply mean integrating systems. It requires breaking down organizational barriers, improving data literacy, and making information both accessible and interpretable.

4. Volume Versus Value

Leaders often confuse data volume with progress. They celebrate petabytes stored in data lakes as teams quietly wonder how to extract actual value. Vanity metrics such as “terabytes ingested per day” disguise the lack of insight.

Netflix confronted this while developing its early recommendation systems. Instead of hoarding more clicks, the company captured richer signals, such as time-of-day viewing, completion rates, and cross-genre patterns. These value-laden features improved recommendations and kept users engaged.

AI progress isn’t measured by how much data an organization collects, but by whether it captures the right signals. Without shifting from volume to value, data initiatives risk becoming expensive storage projects rather than engines of insight.

5. Labeling and Annotation

Supervised learning requires labeled examples, and labeling consumes both time and money.

Autonomous vehicle companies like Waymo face massive data annotation demands. Their systems must distinguish hundreds of different object types, each appearing in diverse conditions and environments. These systems rely on vast, granular datasets to understand road scenes in real time.

Smaller organizations that want to use computer vision for inventory management or defect detection quickly realize that labeling costs can stall projects before they scale.

Labeling creates a bottleneck that favors well-resourced organizations. When only they can afford annotation at the required scale, innovation risks becoming concentrated in a few players. For smaller teams, projects stall not because the algorithms lack potential, but because the training data never reaches the required quality or volume.

This imbalance doesn’t just slow adoption. It limits which organizations can scale their ideas.

6. Timeliness and Latency

Data ages quickly. A recommendation model trained on last year’s interactions delivers yesterday’s preferences.

Financial firms understand this intuitively: They design AI systems that react to market signals within milliseconds. Retailers respond to what a customer just clicked, not to a purchase from last quarter, while hospitals monitor real-time vitals to detect sepsis before it turns fatal. When data lags, AI produces outputs that arrive too late to be useful or even safe.

The challenge isn’t collecting data. It’s keeping the data current. Models built on outdated inputs risk irrelevance in fast-moving environments such as markets, retail, or healthcare. No matter how advanced the algorithm, outdated data leads to outdated decisions.
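Keeping data current starts with knowing how old it is. As a minimal sketch, assuming each record carries a timezone-aware `last_updated` timestamp, a freshness check might look like the following. The names `is_stale` and `max_age` are hypothetical, and the acceptable age depends entirely on the domain.

```python
from datetime import datetime, timedelta, timezone
from typing import Optional


def is_stale(
    last_updated: datetime,
    max_age: timedelta,
    now: Optional[datetime] = None,
) -> bool:
    """Return True when a record is older than the allowed age.

    `now` can be passed in explicitly so the check is reproducible in tests.
    """
    current = now if now is not None else datetime.now(timezone.utc)
    return current - last_updated > max_age


# Illustrative timestamps: a reading taken one day before the check runs.
check_time = datetime(2024, 1, 2, 12, 0, tzinfo=timezone.utc)
reading_time = datetime(2024, 1, 1, 12, 0, tzinfo=timezone.utc)

too_old_for_streaming = is_stale(reading_time, timedelta(minutes=5), now=check_time)
too_old_for_reporting = is_stale(reading_time, timedelta(days=7), now=check_time)
```

The same record can be stale for one use and fresh for another, which is why the threshold is a parameter rather than a constant.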

7. Privacy, Security, and Compliance

As AI’s influence grows, so does the risk of misusing sensitive data.

In 2015, Google’s DeepMind received access to the health records of 1.6 million NHS patients from the Royal Free London NHS Foundation Trust. In July 2017, the Information Commissioner’s Office ruled that the Trust had violated the UK’s Data Protection Act by failing to inform patients adequately and by not demonstrating that the data transfer had a lawful basis.

Regulators instructed the Trust to conduct a privacy impact assessment, improve transparency, and undertake an audit.

Now, organizations must navigate regulations such as GDPR in Europe, CCPA in California, and HIPAA throughout the United States. Regulators can impose heavy fines, and customers can quickly lose trust. Leaders who neglect privacy and security expose their organizations to legal and reputational risks.

Privacy lapses aren’t just compliance issues. They erode the trust that makes data sharing possible in the first place. Once patients, customers, or partners feel their information has been mishandled, rebuilding confidence is far harder than preventing the lapse would have been.

Strong privacy practices aren’t optional guardrails. They’re foundational to sustaining AI’s legitimacy and long-term adoption.

8. Interpretability and Provenance

Stakeholders don’t just expect predictions. They demand explanations. Organizations must know why a model reached a decision and trace the data lineage behind it.

In finance, regulators require banks to explain why they deny a loan. When a model trained on opaque or poorly documented data makes that call, the bank struggles to defend its reasoning. A lack of provenance turns AI into a liability rather than an asset.

The European Central Bank, for example, has stressed the importance of explainability in AI credit risk models, warning that institutions must maintain transparency and governance over their data pipelines to avoid compliance breaches.

Without transparency, AI decisions can’t be trusted by regulators, customers, or even the organizations deploying them. Black-box outcomes may expedite predictions, but they slow down accountability.

In high-stakes domains like finance, explainability isn’t a feature to add later. It’s a prerequisite for using AI responsibly.
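Tracing lineage is easier when every dataset used for training carries a small, verifiable record of where it came from. The sketch below shows one possible shape for such a record, not a standard. `LineageRecord`, `fingerprint`, `record_lineage`, and the example system and step names are all hypothetical.

```python
import hashlib
import json
from dataclasses import dataclass
from typing import Dict, List, Sequence, Tuple


@dataclass(frozen=True)
class LineageRecord:
    dataset_name: str
    source_system: str
    content_sha256: str
    transformations: Tuple[str, ...]


def fingerprint(rows: List[Dict[str, object]]) -> str:
    """Hash a canonical JSON encoding so identical rows always yield the same digest."""
    canonical = json.dumps(rows, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()


def record_lineage(
    name: str,
    source: str,
    rows: List[Dict[str, object]],
    transformations: Sequence[str],
) -> LineageRecord:
    """Capture what the data was, where it came from, and what was done to it."""
    return LineageRecord(name, source, fingerprint(rows), tuple(transformations))


# Illustrative values only.
loans = [{"applicant_id": 1, "income": 52000}, {"applicant_id": 2, "income": 61000}]
lineage = record_lineage(
    "loan_training_set", "core_banking_export", loans, ["drop_nulls", "cap_income"]
)
```

Storing the digest alongside the model lets a reviewer confirm later that the data being examined is the data the model was actually trained on.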

9. Scaling Across Domains

AI pilots often succeed in isolated contexts but fail when organizations scale them.

For instance, a sales model trained on European data tends to underperform in the United States, as customer behavior, regulations, and product offerings differ. Hospitals encounter a similar issue: a model developed for one facility may not generalize well to another, as coding practices, patient demographics, and clinical workflows vary.

Researchers at the University of Michigan revealed this issue in their evaluation of the widely used Epic Sepsis Model. In their external validation, the model’s ability to distinguish septic from non-septic patients (an area under the curve of about 0.63) fell well below the performance its developer had reported, and it missed roughly two out of every three patients who were actually septic.

An AI system that thrives in a controlled setting may falter when applied to diverse environments. This is exactly why cross-domain validation is necessary for building resilient AI.

Scaling isn’t just a technical hurdle. It’s a test of whether models reflect the messy diversity of real-world contexts. An algorithm that performs well in a pilot but collapses in practice doesn’t just waste investment; it undermines confidence in AI as a whole.

Without rigorous validation across settings, organizations risk deploying tools that look promising in the lab but fail where it matters most.
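Validation across settings can begin with something as simple as reporting a metric per site instead of one pooled number. The following is a minimal sketch, assuming binary labels and predictions tagged with a site identifier. The function name `sensitivity_by_site` and the example sites are hypothetical.

```python
from collections import defaultdict
from typing import Dict, Iterable, Tuple


def sensitivity_by_site(rows: Iterable[Tuple[str, int, int]]) -> Dict[str, float]:
    """Compute the share of true cases the model caught, separately for each site.

    Each row is (site, y_true, y_pred) with 0/1 values. Sites with no true
    cases are omitted because sensitivity is undefined for them.
    """
    caught = defaultdict(int)
    missed = defaultdict(int)
    for site, y_true, y_pred in rows:
        if y_true == 1:
            if y_pred == 1:
                caught[site] += 1
            else:
                missed[site] += 1
    sites = set(caught) | set(missed)
    return {site: caught[site] / (caught[site] + missed[site]) for site in sites}


# Illustrative predictions: strong at the pilot site, weak at a new one.
predictions = [
    ("pilot_hospital", 1, 1),
    ("pilot_hospital", 1, 1),
    ("pilot_hospital", 0, 0),
    ("new_hospital", 1, 1),
    ("new_hospital", 1, 0),
    ("new_hospital", 1, 0),
]
per_site = sensitivity_by_site(predictions)
```

A pooled figure over these rows would hide the fact that the model catches every case at one site and only a third at the other.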

10. Change Management

Even the most advanced AI models can collapse when their environment changes. The COVID-19 pandemic made this painfully clear: many organizations deployed AI decision tools that failed because their training data no longer reflected new patterns in consumer behavior or patient flows. These tools quickly became obsolete.

A compelling example comes from MIT Technology Review, which reported that hundreds of AI systems developed during the pandemic failed to provide effective assistance in real-world situations. Many of these tools struggled due to data access issues, standardization problems, and faulty assumptions, rendering them ineffective during a crisis when speed and adaptability were most crucial.

Organizations that fail to retrain and recalibrate their models as conditions evolve risk deploying brittle systems. AI can’t remain static. Leaders must prepare for changing contexts if they expect AI systems to stay relevant.

Accuracy at one point in time doesn’t guarantee resilience when conditions change. AI that can’t adapt quickly becomes a liability in rapidly changing environments, such as pandemics, markets, or supply chains.

Continuous retraining and recalibration aren’t optional extras. They’re the only way to keep models useful when the world refuses to stand still.
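Deciding when to retrain requires some signal that the world has moved. One common heuristic is the population stability index, which compares the distribution of a feature at training time with its distribution today. The sketch below handles categorical values only, and the `floor` used to avoid division by zero is an assumption rather than a standard.

```python
import math
from collections import Counter
from typing import Hashable, Sequence


def population_stability_index(
    baseline: Sequence[Hashable],
    current: Sequence[Hashable],
    floor: float = 1e-6,
) -> float:
    """Sum (actual - expected) * ln(actual / expected) over all categories."""
    if not baseline or not current:
        raise ValueError("both samples must be non-empty")
    base_counts = Counter(baseline)
    curr_counts = Counter(current)
    psi = 0.0
    for category in set(base_counts) | set(curr_counts):
        # Floor the shares so categories absent from one sample do not divide by zero.
        expected = max(base_counts[category] / len(baseline), floor)
        actual = max(curr_counts[category] / len(current), floor)
        psi += (actual - expected) * math.log(actual / expected)
    return psi


# Illustrative channel mix before and after a sudden shift in behavior.
before = ["in_store"] * 5 + ["online"] * 5
after = ["in_store"] * 1 + ["online"] * 9
drift_score = population_stability_index(before, after)
```

A frequently cited rule of thumb treats values above roughly 0.2 as a shift worth investigating, but that threshold is a convention to tune, not a guarantee.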

The Physiology of Data Failure

These challenges interact and reinforce one another. Poor-quality data amplifies bias. Silos make access even harder. Weak provenance erodes trust. Together, they create the physiology of an unhealthy organization, characterized by brittle bones, underdeveloped muscles, and fraying connective tissue.

To build AI that delivers, leaders must nurture strong data foundations. They must move beyond checklists and treat data readiness as a living physiology: the right data, tools, culture, and processes working together to execute a data strategy effectively.

This means seeing data as an evolving and integrated system built to adapt, learn, and thrive.