“Healthcare” is a loaded word.

The average American spends 12% of their day focused on their health (more if they’re disabled, retired, or suffer from a chronic condition). The fitness and wellness industry was recently valued at $6.8 trillion, and nearly 1 million physicians practice in the United States as of 2024. By every measure, the investment is enormous.

And yet, for all those resources, a patient’s life expectancy and quality of life may be influenced by a factor as pedestrian as their zip code.

That’s because where you live is just as big a factor in your health as how you live. Your zip code determines your access to healthy food, education, technology, clean air and water, and, above all, hospitals and doctors who are equipped and ready to help when you need them.

But in the era of rapidly closing rural hospitals, socioeconomic disparities, soaring insurance costs, and “Dr. ChatGPT,” the idea of what it means to receive care is shifting. It’s time healthcare organizations stand up and take note.

AI Promises to Democratize Access, but for Whom?

Depending on whom you ask, the promises of AI are vast and far-reaching: leaner staffing, quicker patient diagnosis, and analytics that strike at the heart of customer wants and needs in ways that are intuitive, personalized, and built to scale, to name just a few.

Amid all the bright lights, though, the rapid pace of AI development is forcing healthcare providers and organizations to pause and address pressing ethical questions on this new frontier.

Efficiency, but at what cost? Intuitive user experiences, but biased toward whom? Increased revenue and soaring profits, but who’s left behind?

These questions demand a careful evaluation of the current technology landscape, along with a bit of “crystal ball” peering: combining what we already know about where equity, access, and efficiency intersect with emerging data that can show us the pitfalls ahead and how to avoid them.

AI stands to reshape how organizations operate, how people connect with one another in both professional and private life, and how we think about institutional trustworthiness and privacy. Leaders bear a responsibility to make the right choices, ones rooted in equity and empathy, as they serve their markets and stakeholders.

To understand digital equity, we need to step back and examine the language: what the digital divide means today, and the role digital literacy plays in modern society.

What Is the Digital Divide?

Coined in the mid-1990s, the term “digital divide” has become a catch-all phrase for the growing gap in access to computers and the internet experienced by large swaths of the United States and, indeed, the global population.

Originally analyzed through the lens of how many American homes had affordable telephone service, the term has since broadened to include access to affordable, reliable home internet and devices such as personal laptops and smartphones. Alongside the digital divide, the term digital literacy describes the skills and awareness a person needs to make full use of those tools and technologies.

For context, as of 2024 the Pew Research Center found that 5–7% of American households lack reliable, affordable internet access. Even among those who do have access, a staggering 13.3% either have no personal computing device or rely on a smartphone alone to get online.

Even in zip codes where raw access to reliable broadband isn’t an issue, we see large disparities in digital literacy. The more healthcare moves online, the more a customer’s digital literacy skills can be the difference between success and failure.

Digital literacy encompasses a customer’s ability to:

- Use web and mobile interfaces

- Avoid inadvertent cybersecurity risks

- Understand complex billing, medical disclosure, and consent forms

- Think critically about their personal online security

- Understand the role privacy and personalization play in their digital footprint

All of these are factors organizations must grapple with as they meet the rising demand to incorporate AI-first solutions. Failure to do so risks creating a multi-tiered hierarchy of access, further deepening the rift between those who can use digital tools and those who can’t.

Can AI Help Build a Bridge to Better Care?

In no market is the double-edged sword of AI more apparent than in healthcare, an industry ripe for both new solutions and critical evaluation.

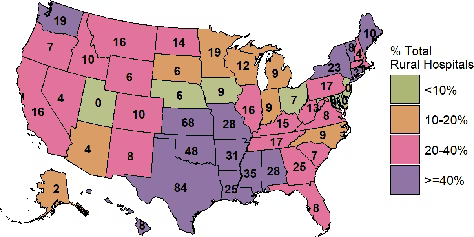

With an alarming one in three rural hospitals at risk of closing (per the latest reporting from the Center for Healthcare Quality and Payment Reform), it’s easy to see how agentic AI solutions and telemedicine could be a ready and welcome source of relief for communities already struggling with access to good healthcare.

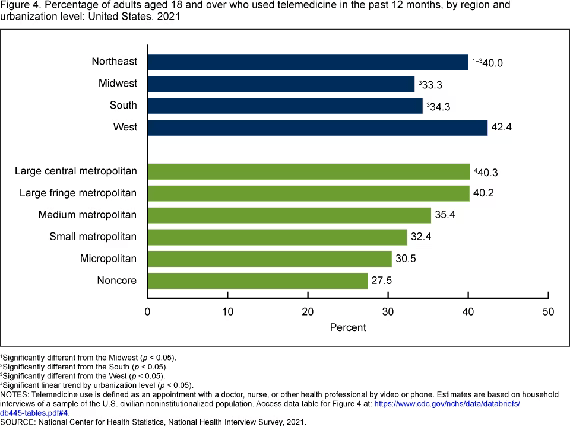

Since 2021, telemedicine adoption in the United States has steadily increased to nearly 40%. If we dig into who is actually using these services, however, we see that in rural communities, where access to doctors and comprehensive hospital care often lags behind that of metropolitan areas, the adoption rate is only 27.5%.

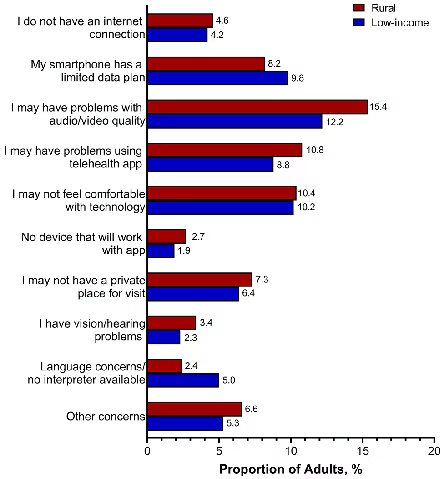

The reason is partially infrastructural: rural areas lack the same distribution of broadband fiber or telecom towers as major cities might. But infrastructure isn’t the whole story.

Education and digital literacy also play a huge role in a prospective patient’s ability to access, understand, work with, and adopt AI technologies. The harder sectors like healthcare push for AI-first solutions, the greater the risk that customers without those skills will struggle to get the care they need.

Survey data on barriers to telehealth use among rural and low-income adults (weighted to be nationally representative within racial/ethnic groups) bears this out: only 57.4% of rural adults and 56.9% of low-income adults reported having no barriers to telehealth use.

Despite clear evidence that barriers to telemedicine exist, including limited access to technology and limited awareness of telemedicine as a viable option for patients and their families, there is a silver lining. Studies show that when rural community members have access to reliable telemedicine services, the outcomes are overwhelmingly positive.

In short, it works.

Intuitive User Experience Is in the Eye of the Beholder

Rural Americans aren’t the only group facing these access gaps. According to the latest 2025 census data, adults over 65 now outnumber children in 11 states, and that trend is continuing.

In a society that already puts so much emphasis, and so much money, into treating patients after a health event, turning technology into yet another barrier to proactive, preventive care seems both questionable and bad for business.

Our older members of society are actively trying to keep up. Recent studies confirm this: they’re demanding more from their technology, investing in building their skillsets, and finding ways to integrate it into their daily lives.

If used correctly, AI may help offset the burden of caregiving by allowing older adults to age in place through assistive devices, smart-home fall detection and locks, and voice-activated AI assistants like Alexa and Siri. The difficulty lies in helping the most vulnerable members of our society keep pace with technology that moves at the speed of light (and at the speed of investor demands).

Slowing down is never easy, but here are a few questions savvy healthcare providers can ask themselves:

- Do we need an AI-based solution, or will a more standard approach work just as well? The term AI has become a catch-all encompassing agentic bots, automation, and countless other tools. Ask whether the problem you’re seeking to solve is a good fit for AI.

- Are our patients demanding AI or agentic solutions to their problems, or are they asking us to deliver our core competencies better? If the answer is at all unclear, or you don’t have sufficient data to answer it honestly, it might be time to have a real conversation with your stakeholders and ask, “Who’s better off?” before adding more technology to the mix.

- Are we prepared to handle the increased privacy and security demands of AI? Securing protected health information (PHI) under HIPAA and other personally identifiable information (PII) is a given in the healthcare industry. But the added demands of AI, and the question of who is responsible for the intimacy it can create with users, are still-developing areas that require careful consideration.

Serving Two Masters: Equity and Privacy Through Strategic Frameworks and AI Governance

Per the Organisation for Economic Co-operation and Development (OECD), “When someone writes ‘I woke up anxious about my cardiology appointment,’ or, ‘Help me negotiate my company’s lending term sheet,’ or ‘Is my grandmother entitled to rent protection?’ they share health information, trade secrets, and family information, respectively.”

Prompts like these are usually just the beginning of conversations with chatbots that go much, much deeper and can get even more personal and revealing. How healthcare organizations handle the storage, processing, and security of these deeply personal datasets can’t be overlooked.

The exposure to both the organization and the consumer could outweigh the potential benefits to the bottom line.

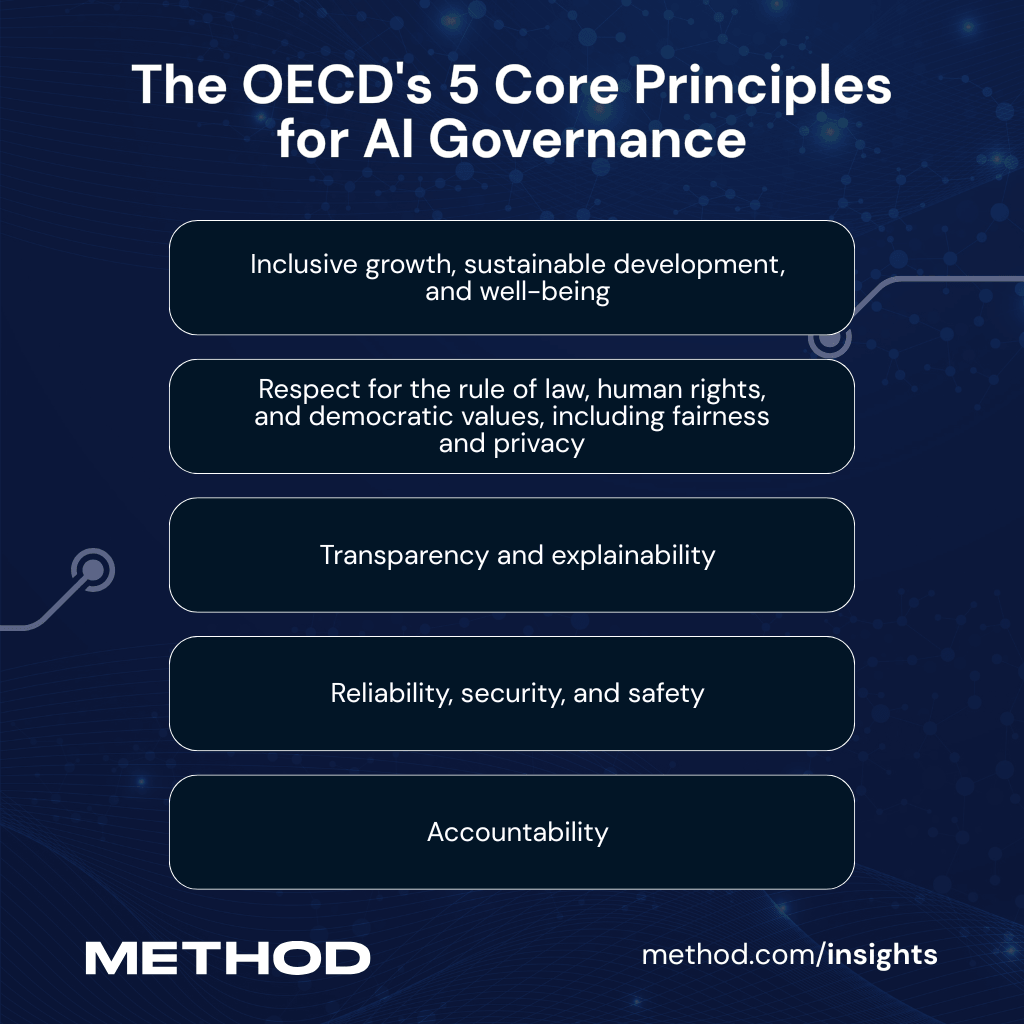

For healthcare providers and others trying to keep pace with the fast-moving demands of privacy and security, the OECD’s AI Principles offer a useful starting point. The OECD-AI Principles overview outlines five core principles that governments and organizations can follow when developing their own AI governance frameworks.

Per the OECD, the five principles are:

- Inclusive growth, sustainable development, and well-being

- Respect for the rule of law, human rights, and democratic values, including fairness and privacy

- Transparency and explainability

- Robustness, security, and safety

- Accountability

While the OECD’s recommendations are primarily aimed at governments, its principles can be readily adapted to the growing needs of AI actors in any sector. And the risks these principles address are real. The more organizations rely on agentic chatbots, for example, to collect customer and client information, the more risk they invite. That risk is magnified for users with limited digital literacy, who may not fully understand what they are sharing or how it will be used.

In 2023, the Institute of Electrical and Electronics Engineers (IEEE) released its updated “Effective Governance of Artificial Intelligence” position statement, which outlines its approach to building effective, safe, and ethically minded technology and begins to speak to the needs of organizations that find themselves stewards of growing databases of personal information. IEEE strongly advocates for systems that promote openness and transparency and uphold the right of appeal, and it encourages organizations to keep investing in AI and AI-first infrastructure to remain competitive and stay current with the latest security and governance protocols.

IEEE’s broader perspective on putting ethics at the center of systems-level design is worth exploring as well.

Looking Ahead

AI and equity may seem like uneasy housemates, but the future of AI development isn’t all dark. In some cases, the solution to these pressing societal problems may be staring us in the face: AI itself.

When trained and applied correctly, AI can make digital infrastructure more intuitive. Through natural language processing and speech-to-text recognition, AI-powered tools can be more accessible to customers across a range of physical and intellectual abilities.

AI-first solutions can also accelerate and simplify localization, providing up-to-date, accurate translations across a variety of languages with minimal customer input. Meanwhile, the growing adoption of small language models (SLMs) is showing organizations a path toward more tightly trained AI models built to handle focused datasets with far less overhead than traditional large language models (LLMs), as much as 60% less in some cases, according to a report by IBM.

In turn, patients win: lighter-weight, more accurate models can support offline use and deliver better-quality information and guidance. Organizations, meanwhile, can preserve resources and protect their bottom line, freeing them to invest in the infrastructure needed to support ethical development.

All told, the future of AI and equity rests on our shoulders. Build thoughtfully.